Two climate risk providers assess the same building, under the same emissions scenario, using the same generation of climate models. One classifies it as highly exposed to flooding. The other puts it at zero risk. This is not an outlier finding from a single comparison. It is the documented, reproducible norm across all major benchmarking exercises to date.

Hain, Kölbel and Leippold compared six physical risk scores for several hundred firms under a high-emissions scenario at the 2050 horizon. Correlations between the different scores were weak across the board, with two model-based providers showing a statistically significant negative correlation, meaning they held essentially opposite views on which firms face the greatest physical risk. Agreement on which firms belonged in the top risk category was close to zero.
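To make the two agreement measures concrete, here is a minimal sketch in Python. The scores are invented and deliberately extreme, not taken from Hain, Kölbel and Leippold. The helper names (`ranks`, `pearson`, `spearman`, `top_overlap`) are hypothetical, and rank correlation is one common way to compare scores, not necessarily the statistic the paper used.

```python
def ranks(values):
    """Rank values from 1 (lowest) upward; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(x, y):
    """Plain Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    spread_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    return cov / (spread_x * spread_y)


def spearman(x, y):
    """Rank correlation: Pearson correlation computed on the ranks."""
    return pearson(ranks(x), ranks(y))


def top_overlap(x, y, k):
    """Share of the k highest-scored firms under x that are also in y's top k."""
    top_x = set(sorted(range(len(x)), key=lambda i: x[i], reverse=True)[:k])
    top_y = set(sorted(range(len(y)), key=lambda i: y[i], reverse=True)[:k])
    return len(top_x & top_y) / k


# Hypothetical physical risk scores for the same eight firms from two providers.
provider_a = [10, 20, 30, 40, 50, 60, 70, 80]
provider_b = [80, 70, 60, 50, 40, 30, 20, 10]

print("rank correlation:", round(spearman(provider_a, provider_b), 3))
print("top-2 overlap:", top_overlap(provider_a, provider_b, k=2))
```

In this toy case the two providers rank the firms in exactly opposite order, so the rank correlation is negative and no firm appears in both top groups. Real comparisons are noisier, but these are the same two questions: do the rankings agree, and do the providers flag the same firms as most exposed?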

The GARP Risk Institute ran the most operationally relevant test: 13 vendors assessed the same 100 properties across the UK, continental Europe, the US and Asia. Results diverged across all hazard types. One vendor placed a well-known Boston building on a road with a similar name in Atlanta, 1,507 kilometres away, because of an error in matching the address to its location on a map.

The Bank of England’s Climate Biennial Exploratory Scenario found that some participating banks estimated loss rates for the same borrower at 10 times those of other banks. This was a live supervisory stress test, run with real institutions on real loan books.

The instinct is to blame the climate models, but the evidence points elsewhere.

The translation chain

Between what an Earth system model (a computer simulation of the planet’s climate) produces and the number that determines your insurance premium, your mortgage rate, whether your property holds its value, or whether your insurer stays in your state, there are six layers of methodological choice. Each layer requires assumptions, each assumption amplifies the next, and each layer introduces its own source of disagreement that carries through to everything downstream.

Climate model selection. The current generation of global climate models (known as CMIP6, the sixth round of a coordinated international modelling effort) contains around 50 to 60 models from roughly 30 research centres worldwide. A significant fraction of these run “hot,” projecting higher warming than observations and expert assessments support. Whether a provider filters these out, and how, can meaningfully shift regional temperature projections before anything else in the chain happens. Hawkins and Sutton showed that disagreement between climate models is the dominant source of uncertainty in temperature projections out to around 2050, larger than the uncertainty from which emissions pathway the world follows. For rainfall projections, model disagreement dominates at all time horizons.

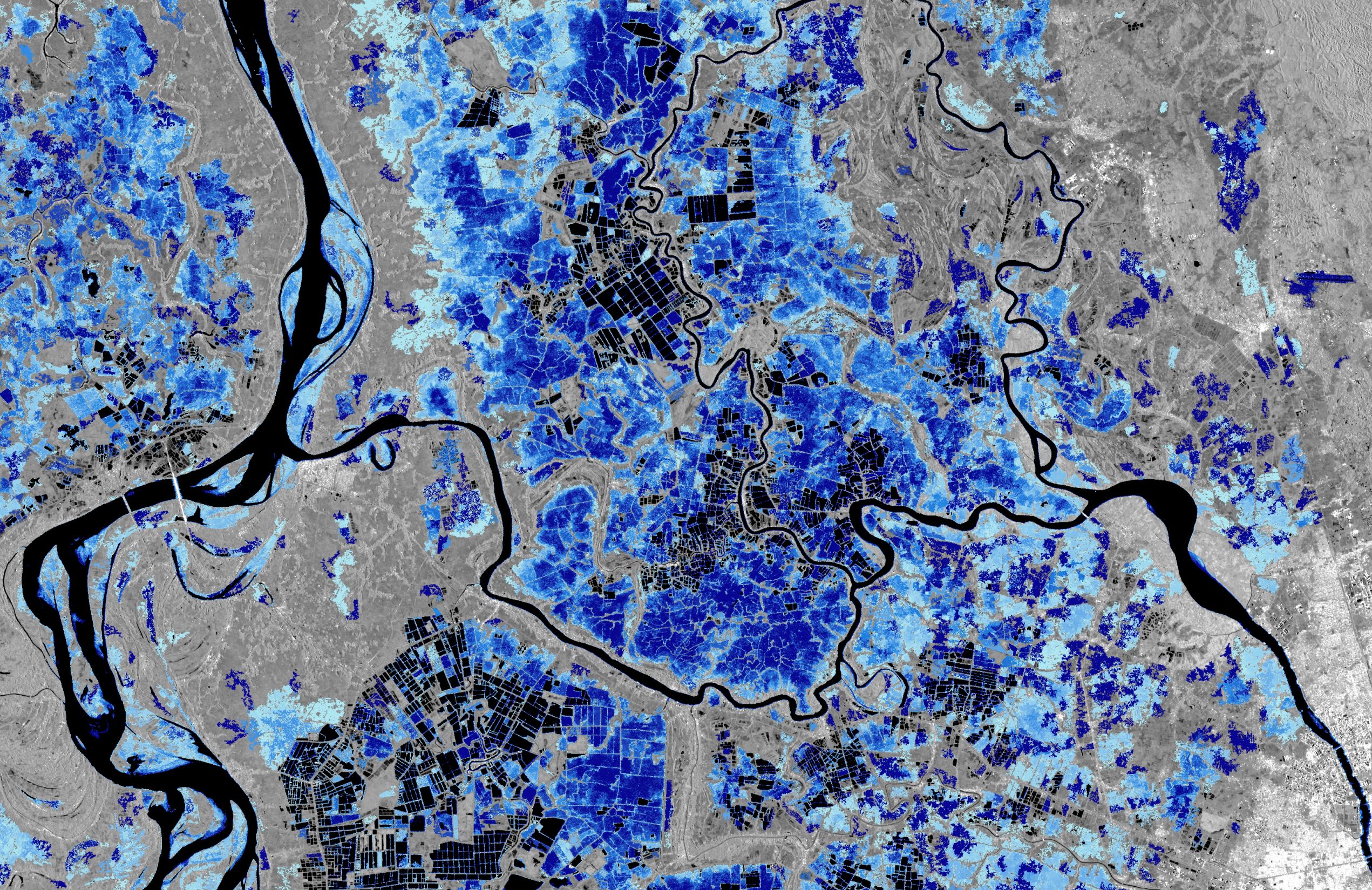

Downscaling. Standard climate model output arrives at roughly 100 km resolution, meaning each grid cell covers an area larger than most cities. Financial decisions happen at the level of a building, a field, a stretch of coastline. Providers use various techniques to translate coarse global projections into local detail. Statistical methods are cheap but can miss extreme events, particularly intense localised storms. Physics-based methods are more reliable but orders of magnitude more expensive, and the highest-resolution versions (capable of simulating individual thunderstorms) remain rare. The choice of method determines whether a provider can even distinguish one side of a river from the other.

Hazard modelling. Each type of climate hazard, whether flood, wind, heat, fire, or drought, requires its own model layered on top of climate projections. For flood alone, catastrophe models from Verisk, Moody’s RMS, KatRisk, Karen Clark & Company and First Street diverge substantially. Dusseau et al. found that Karen Clark & Company produces Florida flood damage estimates roughly 13 times lower than competitors, while First Street’s estimates run higher than others nationally. Schubert, Mach and Sanders compared First Street’s national flood model with UC Irvine’s PRIMo-Drain model for Los Angeles County: the two agreed on which properties face significant flooding risk only 24% of the time. CarbonPlan compared Jupiter Intelligence and XDI on 128 California locations for wildfire and found 12% agreement on where risk would increase by 2100.

Exposure data. Identifying which assets sit within a hazard zone requires location databases, ranging from open sources like OpenStreetMap to proprietary systems that cover millions of buildings. Bressan et al. showed that investor losses are underestimated by up to 70% when providers use industry averages instead of pinpointing individual buildings, and by up to 82% when extreme weather events are excluded from the analysis. The GARP study’s 1,507 km address error is an extreme case, but the underlying problem is structural: most financial portfolios do not contain precise coordinates for every asset they hold.
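A cheap sanity check can catch the crudest location errors. The sketch below, in Python, computes the great-circle distance between a geocoded point and the place the asset is supposed to be, then flags implausible gaps. The city-centre coordinates are approximate, the function names are hypothetical, and the 50 km tolerance is an arbitrary assumption, not a threshold taken from the GARP study.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def geocode_is_plausible(geocoded, expected_centre, max_km=50.0):
    """True if the geocoded point lies within max_km of where the asset should be."""
    return haversine_km(*geocoded, *expected_centre) <= max_km


# Approximate city-centre coordinates (latitude, longitude), used for illustration.
BOSTON = (42.3601, -71.0589)
ATLANTA = (33.7490, -84.3880)

distance = haversine_km(*BOSTON, *ATLANTA)
print(f"{distance:.0f} km apart")
print("plausible match:", geocode_is_plausible(ATLANTA, BOSTON))
```

A check like this only catches gross mismatches such as a building landing in the wrong city. It does nothing for the subtler problem of a point placed on the wrong side of a river, which requires building-level coordinates in the first place.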

Vulnerability and damage functions. This is where the evidence points to the single largest source of disagreement in the entire chain. The standard approach uses “depth-damage functions,” essentially lookup tables that estimate the percentage of a building’s value destroyed at a given flood depth. Wing et al. analysed over 2 million US flood insurance claims and found that real-world flood losses do not follow a simple “deeper water means more damage” pattern, contradicting the foundational assumption of every standard damage table in use. De Moel et al. showed that the choice of damage model alone produces a factor-of-four variation in loss estimates; adding uncertainty about how deep the water actually gets pushes the total range to a factor of five to six. Scorzini and Frank found that applying damage functions developed for one region to another, without recalibration, yields errors of 50 to 360% (!).
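For readers who have not seen one, a depth-damage function is simple enough to sketch in a few lines of Python. Both curves below are invented for illustration and do not correspond to any published damage table. The function name and the building value are likewise hypothetical. Note that each curve rises steadily with depth, which is exactly the assumption Wing et al. call into question.

```python
from bisect import bisect_right


def damage_fraction(depth_m, curve):
    """Interpolate the share of building value lost at a given flood depth.

    `curve` is a list of (depth in metres, damaged fraction) pairs sorted by depth.
    Depths outside the table are clamped to the first or last entry.
    """
    depths = [d for d, _ in curve]
    if depth_m <= depths[0]:
        return curve[0][1]
    if depth_m >= depths[-1]:
        return curve[-1][1]
    i = bisect_right(depths, depth_m)
    (d0, f0), (d1, f1) = curve[i - 1], curve[i]
    return f0 + (f1 - f0) * (depth_m - d0) / (d1 - d0)


# Two hypothetical lookup tables, standing in for tables calibrated in different regions.
CURVE_A = [(0.0, 0.00), (0.5, 0.10), (1.0, 0.20), (2.0, 0.40), (4.0, 0.60)]
CURVE_B = [(0.0, 0.00), (0.5, 0.30), (1.0, 0.50), (2.0, 0.80), (4.0, 1.00)]

building_value = 400_000  # hypothetical replacement value
depth = 1.5               # metres of water at the building

loss_a = building_value * damage_fraction(depth, CURVE_A)
loss_b = building_value * damage_fraction(depth, CURVE_B)
print(f"curve A: {loss_a:,.0f}  curve B: {loss_b:,.0f}  ratio: {loss_b / loss_a:.2f}")
```

Same building, same water depth, and the estimated loss more than doubles purely because of which table was chosen. Nothing upstream in the chain has changed.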

At the macroeconomic level, Pindyck was blunt: the functions used to estimate how much economic damage a given amount of warming will cause are “not based on any economic (or other) theory” and their developers “can do little more than make up functional forms and corresponding parameter values.” In late 2024, the NGFS (Network for Greening the Financial System, a group of central banks and financial regulators that sets the reference scenarios for climate stress testing worldwide) replaced the damage function in its toolkit with one based on a paper by Kotz et al. published in Nature. The resulting loss estimates for its “current policies” scenario, meaning what happens if the world keeps doing roughly what it’s doing now, jumped to roughly 14% of GDP by 2050 and 33% by 2100. Those numbers were themselves contested within months: Nature flagged the paper over data reliability concerns, and Bearpark et al. found that a data error in a single country had inflated the estimates by approximately a factor of three. An independent reanalysis by the Bank Policy Institute produced damage estimates that were 80% lower by 2100. The single most consequential methodological input in the reference framework that every central bank climate stress test runs on proved unstable to a data error in a single country.

Financial aggregation. Converting physical damage estimates into portfolio-level numbers introduces additional choices about how to discount future losses, which time horizon to use, and how to account for sector differences. Dietz et al. estimated the total value of global financial assets at risk from climate change at 1.8% expected loss under a business-as-usual pathway, but with a worst-case (99th percentile) of 16.9%, a ninefold range depending on how heavily tail risks are weighted. This layer is arguably the least studied, in part because providers treat their aggregation methods as proprietary and rarely disclose them in enough detail for comparison. The choices made here determine whether a pension fund sees climate risk as a rounding error or a material threat to its members’ retirement savings, and two funds using different providers can reach opposite conclusions about the same underlying physical reality.

The overall effect is multiplicative, not additive: uncertainty at each layer doesn’t just add to the total, it multiplies through. If you take even conservative estimates of the spread at each layer, a factor-of-two in climate projections, a factor-of-two in downscaling, a factor-of-four in damage functions, a factor-of-1.5 in exposure data, the compound result is a potential factor-of-24 spread in asset-level loss estimates. That is a rough illustration of how the uncertainty propagates, not a measured result, but it is consistent with the Bank of England’s finding of ten-fold divergence between participating banks.
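The arithmetic behind that factor of 24 is short enough to write out. The Python sketch below uses the same four illustrative spreads from the paragraph above. The "additive" figure is only one possible reading of what adding uncertainties would mean, included for contrast, and the variable names are hypothetical.

```python
import math

# Illustrative spread at each layer, as quoted in the text (not measured values).
layer_spreads = {
    "climate projections": 2.0,
    "downscaling": 2.0,
    "damage functions": 4.0,
    "exposure data": 1.5,
}

# Multiplicative: each layer scales whatever range it inherits from upstream.
compound_spread = math.prod(layer_spreads.values())

# One additive reading, for contrast: only the excess over 1x accumulates.
additive_spread = 1 + sum(factor - 1 for factor in layer_spreads.values())

# In log space the multiplicative chain becomes a plain sum, which is why
# each extra layer widens the range by a constant factor, not a constant amount.
log_sum = sum(math.log(factor) for factor in layer_spreads.values())

print("compound spread:", compound_spread)
print("additive spread:", additive_spread)
print("exp(sum of logs):", round(math.exp(log_sum), 6))
```

The gap between the two figures grows with every layer added to the chain, which is the practical meaning of "multiplies through."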

Where the chain breaks

I spent years building climate-adaptation products, watching satellite-derived flood data integrate into insurance workflows. The science was sound. The imagery was accurate. And the moment the data crossed into a damage estimate or a risk score, the assumptions underneath it were no longer ours to control, no longer visible to the end user, and no longer traceable back to the physical observation that started the chain.

This is not a new pattern if you come from atmospheric physics. Compounding uncertainty is how climate science has always worked: you feed starting conditions into a model, and at every step the range of possible outcomes expands. The discipline has spent decades developing rigorous frameworks for tracking and communicating that expansion, from running dozens of models in parallel to see where they agree and disagree, to reporting confidence ranges alongside every result. The financial translation chain reproduces the same pattern of compounding uncertainty, but without any framework for tracking it. In climate science, you are trained to carry your uncertainty forward and report it alongside your result. In financial risk products, the uncertainty is stripped out at each layer, and the result is delivered as a single number. The compounding still happens. It just becomes invisible.
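What carrying the uncertainty forward could look like in practice is sketched below in Python. It treats each layer as a random multiplier and reports a range instead of a single number. Everything here is an assumption made for illustration: the lognormal shape, the independence between layers, the base loss of 100,000, and the reuse of the rough layer spreads quoted earlier as 5th-to-95th percentile ratios. No provider is known to work this way.

```python
import math
import random
import statistics

Z95 = 1.6449  # 95th percentile of the standard normal distribution


def sigma_for_spread(spread):
    """Log-space standard deviation whose 95th/5th percentile ratio equals `spread`."""
    return math.log(spread) / (2 * Z95)


def simulate_losses(base_loss, spreads, n=20_000, seed=42):
    """Draw n loss estimates, applying one independent lognormal factor per layer."""
    rng = random.Random(seed)
    sigmas = [sigma_for_spread(s) for s in spreads]
    losses = []
    for _ in range(n):
        factor = 1.0
        for sigma in sigmas:
            factor *= rng.lognormvariate(0.0, sigma)
        losses.append(base_loss * factor)
    return losses


# Hypothetical base loss; spreads for projections, downscaling, damage, exposure.
losses = simulate_losses(base_loss=100_000, spreads=[2.0, 2.0, 4.0, 1.5])

# statistics.quantiles with n=20 returns 19 cut points at 5%, 10%, ..., 95%.
cuts = statistics.quantiles(losses, n=20)
p5, p50, p95 = cuts[0], cuts[9], cuts[18]

print(f"single-number report: {p50:,.0f}")
print(f"range-aware report:   {p5:,.0f} to {p95:,.0f} (5th to 95th percentile)")
```

Under these independence assumptions the reported range is narrower than the full product of the layer spreads, because that product corresponds to every layer landing at its extreme at once; errors that are correlated across layers would widen it again. Either way, the second line of output is the one that financial risk products typically do not deliver.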

CMIP7, the next generation of coordinated global climate modelling, is arriving. First model data began flowing from research centres in 2025, designed in part to feed the next round of international climate assessment (IPCC AR7, expected from 2028). The improvements are real: 60 groups of variables explicitly designed for impact modelling, better data on what happens within a single day (critical for modelling storms and heatwaves), a stronger push toward correcting known model biases from the design stage, new scenarios that explore what happens if temperatures temporarily overshoot targets before coming back down (relevant for financial stress testing), and 13 application areas covering energy, agriculture, water, health and finance. All of which improves the first layer of a six-layer chain, while leaving the other five untouched.

Standard model resolution remains at approximately 100 km. The process of translating that into local detail remains external and takes years. Damage functions remain, in Pindyck’s phrase, “made up.” Hazard models remain proprietary and are rarely compared. Location databases remain fragmented. Financial aggregation methods remain provider-specific. None of these is within CMIP7’s scope, and none was within CMIP6’s scope either.

The Ruane et al. paper laying out what CMIP7 aims to deliver for impact and adaptation users is explicit about the limits: the flow from global climate model outputs to real-world adaptation decisions is complex, non-linear, and full of places where information enters or exits the pipeline. Some users skip steps entirely. Others rely on simplified, computationally cheaper substitutes for full climate models. The pipeline is not a closed system, and improving one end does not automatically improve the other.

Bingler et al. demonstrated this for the risk of transitioning to a low-carbon economy: the methodology adopted by a provider affects the estimated risk value more than the chosen climate scenario. The same logic applies to physical risk. Two providers can use the same climate model and the same emissions pathway and still produce materially different financial loss estimates because they differ in how they translate global projections into local detail, which flood model they use, which damage tables they apply, or which location database they rely on.

What getting this wrong costs

The translation chain’s failures are not academic. Swiss Re’s Resilience Index shows that only 25.7% of global natural catastrophe exposure is protected by insurance; roughly three-quarters is unprotected. The gap between what is insured and what would need to be insured to fully cover the risk reached $1.83 trillion in 2023. Economic losses from natural disasters hit $318 billion in 2024, with only 43% covered by insurance. This was the fifth consecutive year in which insured losses exceeded $100 billion (2025 made it six), and insured losses have been growing at 5 to 7% annually in real terms.

The divergence in risk scores directly contributes to this gap. If a bank’s climate risk provider says a property is low-risk, the mortgage gets written. If the insurer’s provider disagrees, the premium becomes unaffordable, or coverage becomes unavailable. If neither score reflects actual flood exposure, the loss falls on the homeowner and ultimately on the public balance sheet.

In November 2025, Zillow removed climate risk scores from over a million property listings after pressure from the California Regional Multiple Listing Service, which questioned why properties that “hadn’t flooded in 40 to 50 years” were flagged as high risk. Real estate agents complained that the scores were hurting sales. First Street, which provided the data, defended its models as peer-reviewed and validated, noting that during the Los Angeles wildfires, its maps identified over 90% of the homes that burned as being at severe or extreme risk. Both positions are reasonable on their own terms. An area that hasn’t flooded in decades may still face rising flood probability under a changing climate, and a forward-looking model may correctly identify that shift. The reason the two positions produce a standoff rather than a resolution is that the translation chain connecting climate projections to property-level risk scores involves assumptions that neither the listing service nor the homebuyer can see or interrogate. The family buying the house is standing at the end of a six-layer chain of choices about which climate models to trust, how to translate a 100 km grid to their street, which flood model to run, whether their building was correctly located on a map, what damage formula was applied, and how all of that was compressed into a single score. They see the score. They don’t see any of the layers that produced it, and they have no way to know whether a different provider would have told them something completely different.

This is not an American problem. In Europe, the same six-layer chain reaches households through different institutional channels, and in some ways, the consequences are worse because most European homebuyers receive no climate risk information at all before purchase. There is no European equivalent to Zillow that displays risk scores on property listings. The buyer finds out after the flood.

In July 2021, unprecedented rainfall caused the river Ahr in western Germany to burst its banks. Over 190 people died in Germany alone, 134 of them in the Ahr Valley. Total economic losses reached an estimated 33 billion euros, of which less than a quarter was insured. Only around 50% of German homeowners carry natural hazard insurance, with take-up as low as 37% in the worst-hit state, Rheinland-Pfalz. The most striking finding came from the fatality analysis: 75% of the deaths occurred outside the officially mapped hazard zones. The hazard map itself, the output of the translation chain, had significantly underestimated the flood extent because it was built on river flow records that began in 1947 and did not include an event of this magnitude. The six layers did not just produce a divergent estimate. They produced a map that told people they were safe in a place where they drowned.

The contrast across European countries is stark. France bundles natural catastrophe cover into every property insurance policy through its Cat Nat system, achieving roughly 98% penetration, but the pricing is politically managed rather than risk-based, which means the translation chain’s output does not reach the policyholder as a price signal. Germany is debating mandatory natural hazard insurance in the wake of the Ahr Valley disaster, but has not yet implemented it. In much of Southern and Eastern Europe, flood insurance penetration is even lower. The ECB now expects banks to incorporate physical climate risk into their lending decisions, and the European Banking Authority has issued guidelines, but the data infrastructure underneath those expectations is the same fragmented six-layer chain that produces order-of-magnitude disagreements between providers. In Finland, standard home insurance does not cover flood damage at all, so the risk numbers the chain produces have nowhere to go. In Sweden and Norway, flood coverage comes bundled into every home insurance policy, but everyone pays roughly the same rate regardless of how exposed their property actually is, so the chain’s risk estimates don’t change what anyone pays.

The EU Floods Directive sits at the intersection of all of this. It requires member states to produce flood hazard maps on six-year cycles but does not specify data sources, require satellite observations, mandate property-level resolution, or bind risk assessments to building permits or lending decisions. The EU’s own Copernicus Emergency Management Service already runs daily flood forecasts up to 15 days ahead and near-real-time flood maps from radar satellite imagery. The observation capability exists at one end of the chain. The regulation does not connect it to any of the six layers that would need to use it. And the Ahr Valley showed what happens when the map at the end of the chain is built on data that omits the event that actually kills people.

Both ends, no middle

At the science end of the chain, CMIP7 is making climate model output more decision-relevant. At the observation end, satellite flood monitoring, insurance products that pay out automatically when a physical threshold is crossed, and forward-looking catastrophe models are making real-time risk assessment operationally possible. Both ends are improving, and neither is where the problem lies.

The middle of the chain, where models translate physics into money, remains structurally unowned. No institution governs the comparability of damage functions. No regulatory framework requires providers to disclose which methods, models, databases or assumptions they use. The downstream pipeline has no equivalent of the coordinated international comparison that exists for climate models. CarbonPlan has called for exactly this: a nationwide, multi-hazard, asset-level comparison of private climate risk companies. It has not happened.

There is an institutional reason it hasn’t happened, and it goes beyond technical difficulty. The six-layer chain does not just produce disagreement; it produces plausible deniability. Each layer’s assumptions become invisible the moment the output enters the next layer’s input. The bank points to the risk score. The risk provider points to the hazard model. The hazard modeller points to the climate projection. The climate modeller points to the emissions scenario. Nobody owns the full chain of assumptions, and nobody is accountable for the compound result. The number exists not because it is accurate but because it needs to exist, for a regulatory filing, a stress test, a lending decision. The precision of the output absorbs the institutional pressure that would otherwise push for an understanding of the inputs’ uncertainty. This is the same pattern I have written about in the capital chain that connects citizens and pensioners through fund managers to venture capitalists to founders to physical reality: at every link, someone is making a decision based on a number produced by the link before them, and nobody owns the connection between the money and the physics.

Commercial climate risk products built on CMIP7 data are unlikely to reach financial end-users before 2029 to 2030. During the transition, some providers will use the old models, some the new, some a mix, adding a new source of disagreement between providers. The change in scenario design breaks continuity with all existing financial analyses built on the previous generation.

The leverage in this chain does not sit where most of the attention goes. Better climate models matter, but the science is already ahead of the infrastructure that translates it. The layers where the largest disagreement lives, damage functions, hazard models, and exposure resolution, are also the layers with the least transparency, the least standardisation, and the least institutional accountability. That is where the market structure could shift, and where it has the least incentive to do so.

The numbers in your risk report are six layers downstream from reality. So are the score that priced your insurance, the assessment that shaped your mortgage, and the rating that told you your house was safe. Most of the disagreement lies in the layers nobody is comparing, and the market’s structure has no incentive to make that visible.

References

Bearpark, T., Hogan, D. and Hsiang, S. (2025). Data anomalies and the economic commitment of climate change. Nature. https://www.nature.com/articles/s41586-025-09320-4

Bingler, J.A., Colesanti Senni, C. and Monnin, P. (2022). Understand what you measure: Where climate transition risk metrics converge and why they diverge. Finance Research Letters, 50. https://doi.org/10.1016/j.frl.2022.103265

Bank of England (2022). Results of the 2021 Climate Biennial Exploratory Scenario (CBES). https://www.bankofengland.co.uk/stress-testing/2022/results-of-the-2021-climate-biennial-exploratory-scenario

Bank Policy Institute (2024). The NGFS’s new climate damage function: A flawed analysis with massive economic consequences. https://bpi.com/the-ngfss-new-climate-damage-function-a-flawed-analysis-with-massive-economic-consequences/

Bressan, G., Duranovic, A., Monasterolo, I., Murgoci, A. and Pointner, W. (2024). Asset-level assessment of climate physical risk matters for adaptation finance. Nature Communications, 15, 5371. https://doi.org/10.1038/s41467-024-49957-9

CarbonPlan (2023). Climate risk companies don’t always agree. https://carbonplan.org/research/climate-risk-comparison

De Moel, H., van Alphen, J. and Aerts, J.C.J.H. (2010). Effect of uncertainty in land use, damage models and inundation depth on flood damage estimates. Natural Hazards, 58, 407-425. https://doi.org/10.1007/s11069-010-9675-6

Dietz, S., Bowen, A., Dixon, C. and Gradwell, P. (2016). Climate value at risk of global financial assets. Nature Climate Change, 6, 676-679. https://doi.org/10.1038/nclimate2972

Dunne, J.P. et al. (2025). An evolving Coupled Model Intercomparison Project phase 7 (CMIP7) and Fast Track in support of future climate assessment. Geoscientific Model Development, 18, 6671-6700. https://doi.org/10.5194/gmd-18-6671-2025

Dusseau, R. et al. (2026). Validation and comparison of U.S. loss estimates from catastrophe flood models. Journal of Catastrophe Risk and Resilience. https://journalofcrr.com/research/04-01-dusseau-et-al/

GARP Risk Institute / UK Climate Financial Risk Forum (2024). Comparing climate risk vendors: A user’s guide to physical risk assessments. https://www.garp.org/risk-intelligence/sustainability-climate/comparing-climate-risk-251023

Hain, L.I., Kölbel, J.F. and Leippold, M. (2022). Let’s get physical: Comparing metrics of physical climate risk. Finance Research Letters, 46, 102406. https://doi.org/10.1016/j.frl.2021.102406

Hawkins, E. and Sutton, R. (2009). The potential to narrow uncertainty in regional climate predictions. Bulletin of the American Meteorological Society, 90(8), 1095-1107. https://doi.org/10.1175/2009BAMS2607.1

Kalkuhl, M. and Wenz, L. (2020). The impact of climate conditions on economic production. Evidence from a global panel of regions. Journal of Environmental Economics and Management, 103, 102360. https://doi.org/10.1016/j.jeem.2020.102360

Kotz, M., Levermann, A. and Wenz, L. (2024). The economic commitment of climate change. Nature, 628, 551-557. https://doi.org/10.1038/s41586-024-07219-0

NGFS (2024). NGFS Climate Scenarios for central banks and supervisors, Phase V. https://www.ngfs.net/en/publications-and-statistics/publications/ngfs-climate-scenarios-central-banks-and-supervisors-phase-v

Pindyck, R.S. (2013). Climate change policy: What do the models tell us? Journal of Economic Literature, 51(3), 860-872. https://doi.org/10.1257/jel.51.3.860

Ruane, A.C. et al. (2025). CMIP7 data request: Impacts and adaptation priorities and opportunities. Geoscientific Model Development, 18, 9497-9540. https://doi.org/10.5194/gmd-18-9497-2025

Rhein, B. and Kreibich, H. (2025). Causes of the exceptionally high number of fatalities in the Ahr valley, Germany, during the 2021 flood. Natural Hazards and Earth System Sciences, 25, 581-589. https://doi.org/10.5194/nhess-25-581-2025

Schubert, J.E., Mach, K.J. and Sanders, B.F. (2024). National-scale flood hazard data unfit for urban risk management. Earth’s Future, 12, e2024EF004549. https://doi.org/10.1029/2024EF004549

Scorzini, A.R. and Frank, E. (2017). Flood damage curves: new insights from the 2010 flood in Veneto, Italy. Journal of Flood Risk Management, 10(3), 381-392. https://doi.org/10.1111/jfr3.12163

Swiss Re Institute (2024). sigma Resilience Index 2024: Encouraging resilience gains, but more is needed. https://www.swissre.com/institute/research/sigma-research/natural-catastrophe-insurance-global-resilience-index-2024.html

Swiss Re Institute (2025). sigma 1/2025: Natural catastrophes: insured losses on trend to USD 145 billion in 2025. https://www.swissre.com/institute/research/sigma-research/sigma-2025-01-natural-catastrophes-trend.html

Wing, O.E.J. et al. (2020). New insights into US flood vulnerability revealed from flood insurance big data. Nature Communications, 11, 1444. https://doi.org/10.1038/s41467-020-15264-2

De Chant, T. (2025). Zillow drops climate risk scores after agents complained of lost sales. TechCrunch, 1 December. https://techcrunch.com/2025/12/01/zillow-drops-climate-risk-scores-after-agents-complained-of-lost-sales/